All The Tests: PINT’s Overview of Web Testing

At PINT, we are committed to efficiently, effectively, and thoroughly testing our work, whether the product is a simple brochure site or an enterprise B2B or B2C web application. We want to move fast without breaking things. Confidence in product stability is paramount. Robustness is essential.

Delivering a quality product naturally requires an environment where the final product is built from testable and fully tested code, and what needs to be tested is determined by requirements that are gathered at the outset of the project and potentially extended or refined along the way. Getting to that level of testing maturity, however, requires us to overcome some technical and conceptual hurdles.

Testing: Practice & Theory

Recently, a few of our developers gathered for a testing discussion to address some of these technical hurdles (in JavaScript specifically), such as continuous integration (CI) techniques, which testing libraries to use, and which test coverage suites are available.

In this discussion, we collectively acknowledged two important facts: 1) web testing is not trivial and 2) for someone who has never written tests before, getting started can be incredibly difficult.

To help you get started with testing, we’ve compiled some of the basics in this article, namely, the Types of Tests you’ll want to consider employing and the concept of The Testing Pyramid.

Test Types

Understanding each test’s purpose can be confusing if you’re just getting started. For the definitions, we will be focusing on the common elements that allow us to understand what the test is doing.

Our sources differ on what constitutes a unit in a Unit Test, a component in a Component Test, and so on, depending on the design of the language undergoing testing.

Unit Test

A unit test covers a low-level piece of the code base that is small, is quick to test, and has no side effects. Typically in JavaScript, this involves testing a single function, and you’ll likely have hundreds, if not thousands, of these tests in a single application.

For a well-rounded discussion about the conceptual differences in approach to Unit testing, read here.
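As a minimal sketch of the kind of unit test described above, the example below tests a single side-effect-free function using Node’s built-in `assert` module. The `slugify` function and its expected behavior are hypothetical, chosen only for illustration.

```javascript
const { strictEqual } = require('node:assert');

// Hypothetical unit under test: a pure function with no side effects.
function slugify(title) {
  return title
    .trim()
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, '-') // collapse runs of non-alphanumerics into one hyphen
    .replace(/^-+|-+$/g, '');    // strip leading and trailing hyphens
}

// Each assertion checks one small behavior of the function in isolation.
strictEqual(slugify('All The Tests'), 'all-the-tests');
strictEqual(slugify('  Web   Testing!  '), 'web-testing');
strictEqual(slugify(''), '');
```

Because the function touches no network, file system, or shared state, tests like these stay fast and can be written in large numbers.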

Component/Module Tests

A component test has no specific size, but tests the entirety of a logical component or process in the application service layer. In JavaScript, this might mean testing several functions, classes, or a series of objects in the prototype chain that together make up one of the several units of an integration test (discussed later).

It is recommended that component tests be done side by side with unit tests, but by a different team, i.e., the testing team. Of course, if you don’t have a testing team, observe the conceptual difference between unit and component tests, and design a plan of action to tackle them accordingly.
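To make the difference from a unit test concrete, here is a rough sketch of a component test. The `Cart` class is a hypothetical component invented for this example; the point is only that the test exercises several methods together as one logical unit rather than one function at a time.

```javascript
const { strictEqual, throws } = require('node:assert');

// Hypothetical component: a cart built from several small methods.
class Cart {
  constructor() {
    this.items = [];
  }

  add(name, unitPriceCents, quantity = 1) {
    if (quantity < 1) throw new RangeError('quantity must be at least 1');
    this.items.push({ name, unitPriceCents, quantity });
    return this; // allow chaining
  }

  subtotalCents() {
    return this.items.reduce((sum, i) => sum + i.unitPriceCents * i.quantity, 0);
  }

  totalCents(taxRate) {
    return Math.round(this.subtotalCents() * (1 + taxRate));
  }
}

// Component test: add items, then check the subtotal and total they produce.
const cart = new Cart().add('notebook', 450, 2).add('pen', 100);
strictEqual(cart.subtotalCents(), 1000);
strictEqual(cart.totalCents(0.08), 1080);
throws(() => new Cart().add('pen', 100, 0), RangeError);
```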

API Test

An API test is not too dissimilar from a component test, but the underlying logical component or process is exposed via API in the application service layer. A logical grouping of tests is usually denoted by a single route or endpoint that is exposed in the application.

If all of your components are accessible via API, then all of your component tests are API tests.

Contract Test

A contract test requires communication with an external service via API or other methods. It is a variation of a component or API test, distinguished only by the fact that it makes that external service request. Within the test, the service request is likely mocked, so as to avoid any third-party dependencies.

Read more about contract tests on Martin Fowler’s site.
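The mocking described above might look something like the following sketch. Everything here is an assumption made for illustration: the `fetchDisplayName` function, the `users.example.test` URL, and the response shape are hypothetical and do not describe any real service.

```javascript
const { strictEqual, deepStrictEqual } = require('node:assert');

// Hypothetical client code that depends on an external user service.
// The HTTP function is injected so a test can replace it with a stub.
async function fetchDisplayName(userId, httpGetJson) {
  const body = await httpGetJson(`https://users.example.test/v1/users/${userId}`);
  return `${body.firstName} ${body.lastName}`;
}

// Stub standing in for the external service. It records each request and
// replies with the response shape we assume the real service returns.
function createUserServiceStub() {
  const requests = [];
  const httpGetJson = async (url) => {
    requests.push(url);
    return { id: 7, firstName: 'Ada', lastName: 'Lovelace' };
  };
  return { requests, httpGetJson };
}

async function runContractTest() {
  const stub = createUserServiceStub();
  const name = await fetchDisplayName(7, stub.httpGetJson);
  strictEqual(name, 'Ada Lovelace');
  // The request our code sent must match what the service is assumed to expect.
  deepStrictEqual(stub.requests, ['https://users.example.test/v1/users/7']);
  return name;
}

runContractTest();
```

A stub like this is only trustworthy while the real service still answers with the same shape, so the same expectations are worth checking against the real service from time to time.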

Integration Test

Recently, Martin Fowler provided a concise definition of an integration test, with emphasis on implementing narrow integration tests (in contrast to the broad sort), as well as a history of the confusion over what this test means.

According to Fowler, “Integration tests determine if independently developed units of software work correctly when they are connected to each other.”

By this definition, we can infer that an integration test verifies that connected components, or components accessible via API, work correctly with each other.
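A small sketch of that idea follows, assuming two hypothetical units (`parseOrderLine` and `priceOrderLine`) that could each have their own unit tests. The integration test checks only that the output of one is usable as the input of the other.

```javascript
const { strictEqual } = require('node:assert');

// Unit A (hypothetical): parses "sku x quantity" text into an order line.
function parseOrderLine(text) {
  const match = /^(\w+)\s*x\s*(\d+)$/.exec(text.trim());
  if (!match) throw new SyntaxError(`cannot parse order line: ${text}`);
  return { sku: match[1], quantity: Number(match[2]) };
}

// Unit B (hypothetical): prices an order line against a price list (a Map).
function priceOrderLine(line, priceListCents) {
  const unitPrice = priceListCents.get(line.sku);
  if (unitPrice === undefined) throw new RangeError(`unknown sku: ${line.sku}`);
  return unitPrice * line.quantity;
}

// Integration test: feed A's real output into B and check the combined result.
const prices = new Map([['pen', 100], ['notebook', 450]]);
strictEqual(priceOrderLine(parseOrderLine('notebook x 3'), prices), 1350);
strictEqual(priceOrderLine(parseOrderLine(' pen x2 '), prices), 200);
```

If unit A ever changed the name or type of a field it returns, both units could still pass their own unit tests while a test like this one would fail.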

Service Test

A service test is a more concise model that essentially consists of component, integration, and API tests. These types of tests are intended to cover the service layers of the application undergoing testing, i.e., everything just beneath the hood (UI) of the application.

It has been argued that service tests, while crucial, are largely forgotten in favor of UI and Unit Tests only. As applications have grown, it seems desirable, if not necessary, to be more specific about the types of service tests that exist, and isolate them from each other (at least conceptually, if possible).

This breakdown is discussed in more detail here.

Manual Test

A manual test is simply guided by user intervention on the system undergoing testing and can occur at any time, either before, during, or after the automated testing processes.

In the more verbose pyramids below, the manual testing process is represented as a cloud hovering above the pyramid, deliberately disconnected from it.

(G)UI/Functional/End-To-End (E2E)/BroadStack/Full-Stack/Acceptance Test

This type of test manipulates the application through the user interface, i.e., it establishes and maintains control of the application through the browser or the website. The various names for a similar type of test have probably led to a lot of confusion, especially since some of those names have variations; e.g., a BroadStack test can be limited enough in scope to be thought of as an API test.

These types of tests are typically what the stakeholders see as the final product, and as a result, a lot of testers might be instructed to ignore unit and service level tests, in favor of more UI tests (see the “Ice Cream Cone” antipattern below).

Further reading on the subject suggests there is some disagreement on the overall value of UI tests. Back in 2015, the Google Testing Blog advocated limiting UI tests, if not removing them altogether:

“As a good first guess, Google often suggests a 70/20/10 split: 70% unit tests, 20% integration tests, and 10% end-to-end tests.”

In response to that article, however, Adrian Sutton of LMAX dismisses Google’s conclusion, suggesting that:

UI tests are “invaluable in the way they free us to try daring things and make sweeping changes, confident that if anything is broken it will be caught.”

Whatever your position on the subject of UI tests, measure your testing needs against the project requirements.

The Testing Pyramid

Conceptually, web testing, and testing in general, hasn’t changed much over the years, though its representation has evolved. While the foundations have withstood the test of time, the language around the concepts has been altered slightly, and additional layers have been extrapolated from some of the more basic layers.

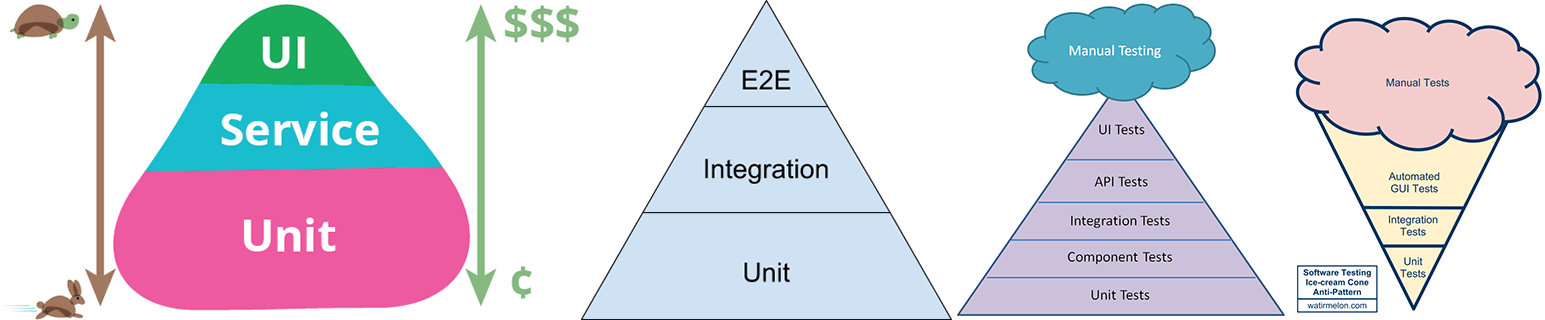

One of the fundamental concepts, the Test Pyramid, is well known in the developer community and has undergone several iterations and interpretations, as pictured above.

The intent of the Testing Pyramid has been to visually quantify test coverage by type. Each level of the pyramid is a type of test, and the ordering of the tests is meant to convey the degree to which each type of test relies on the other for certain kinds of test coverage, and thus for an efficient overall distribution of responsibility for minimizing the risk of defects. Ultimately, this reliance of one layer upon the other is more significant and universal than the exact number of layers or the proportions between them.

For example, in the pyramids above, each level is meant to convey proportions of tests per suite. So the suite of Unit Tests, which cover side-effect free business logic, should be substantially larger than the suite of Service or Integration Tests, which test how modules, components or services work together. Similarly, the suite of Service or Integration tests should in turn be larger than the suite of UI tests. If you need more than three types of testing, you should still seek to distribute them in varying proportions depending on type, as in the third pyramid. This is generally the right ideal to aim at, for reasons we’ll see in a moment.

In practice, it’s possible that, in some projects, the nature of what is being built might call for a more even distribution of test types. In that case, your project might have proportionately less of the side-effect free business logic that unit tests are good at testing, and more service interactions, integrations, UI controls, and so on. Your ideal pyramid would start to resemble more of a cone. Such adaptations are fine, but you also don’t want to push them too far.

The “Ice Cream Cone,” in the above image, is a representation of an inverted testing pyramid. Alister Scott, who published his own version of the testing pyramid, described this antipattern after he discovered flawed implementations of the idea in the real world. It suggests that, without proper attention to detail, organizations tend to have very few unit tests at the base, followed by disproportionate amounts of integration and automated GUI tests, and finally a dollop of manual testing at the top.
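One rough way to see which shape a given suite has is to compare test counts per layer. The sketch below is hypothetical: the `suiteShape` and `layerShares` helpers, the three-layer simplification, and the sample counts are all assumptions made for illustration, with the first sample chosen to match the 70/20/10 split quoted earlier.

```javascript
const { strictEqual, deepStrictEqual } = require('node:assert');

// Hypothetical helper: classify a suite by its test counts per layer.
function suiteShape({ unit, service, ui }) {
  if (unit > service && service > ui) return 'pyramid';
  if (unit < service && service < ui) return 'ice cream cone';
  return 'other';
}

// Share of each layer as a whole percentage of the suite (assumes a non-empty suite).
function layerShares({ unit, service, ui }) {
  const total = unit + service + ui;
  const percent = (count) => Math.round((count * 100) / total);
  return { unit: percent(unit), service: percent(service), ui: percent(ui) };
}

const healthy = { unit: 700, service: 200, ui: 100 };
const inverted = { unit: 20, service: 150, ui: 400 };

strictEqual(suiteShape(healthy), 'pyramid');
strictEqual(suiteShape(inverted), 'ice cream cone');
deepStrictEqual(layerShares(healthy), { unit: 70, service: 20, ui: 10 });
```

Counts alone say nothing about what the tests actually cover, so a check like this is at most a prompt for discussion, not a quality gate.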

It’s important to keep in mind why it makes sense to aim for proportionately more unit tests than, say, UI or End-to-End (E2E) tests.

Looking at the pyramid on the left, the left y-axis (rabbit to turtle) is meant to convey that Unit Tests are faster to create and execute than UI tests, and the right y-axis (cents to dollars) is meant to convey that Unit Tests are less costly (in terms of resources, time, etc.) to write and maintain than UI tests.

Whatever the optimal proportions for a given project, it’s crucial to understand that the higher up you go in the pyramid, the costlier things get. This understanding, if applied early enough in the project, might even help you structure the code itself for maximum testability.

Thus, the key thing about the different pyramid levels and their relative sizes is that they imply an inter-dependency in terms of test efficiency relative to project risk. The more Unit Tests that are written, the more code is covered before you start writing Service Tests. This means that a substantial portion of that code is already tested. So too with UI tests: by the time you write those, Unit and Service tests will have hit most of the code underlying the UI as well.

The pyramid, in effect, visualizes the idea that overall test coverage should be built up using a kind of division of responsibility, in which each distinct type of testing focuses on the type of risk it is best suited for managing, relying on the others to do likewise.

This matters for a couple of reasons:

First, it keeps the more time-consuming and expensive tests focused on areas of project risk that simply can’t be covered by faster and cheaper methods. Sometimes you really do need to employ a more costly tool to get the job done. There’s nothing wrong with that, provided you employ it when and where it’s truly needed, rather than using it indiscriminately just because it is in your arsenal.

Second, if you can catch a bug in a finer-grained test, finding the root cause and fixing it will often be far easier than if that same bug has to bubble all the way up to a higher-level test before it sets off any alarms. The key issue in both cases is one of efficiently managing the risk of a defect getting through undetected.

Always Be Testing

Gathering a project’s requirements, generating a complete and consistent specification, and growing the prototypes from the requirements and specifications into a full-blown website or web application is quite an undertaking.

When executed correctly, it allows for a sense of accomplishment that a web agency can thrive on, especially if the client and stakeholders are satisfied.

But all of that is inconsequential if you have zero tests backing up that deliverable.

Writing the tests is not enough. Understanding why tests are written, what they are being written for, and how they are written can be problematic given the history of web testing. But tests are essential for having unwavering confidence in the stability of the product, especially if you want to deliver quality, consistently.

So we need to always be testing.

Additional Resources

In addition to what we’re covering here, this technical article does a great job consolidating testing resources and could well be considered one of the most useful, comprehensive guides available for modern web testing for JavaScript right now. We won’t be making any recommendations (be wary of the author’s) as far as what to use or how to use it, but we do implore you to read it with a critical mind.

For thoroughness, Google detailed another approach to testing back in 2010. It aims to simplify how these tests are understood and written, but it doesn’t seem to have gained much traction. The table of tests is provided for brevity, as we will not be covering the approach in detail.

| Feature | Small | Medium | Large |
| --- | --- | --- | --- |
| Network access | No | localhost only | Yes |
| Database | No | Yes | Yes |
| File system access | No | Yes | Yes |
| Use external systems | No | Discouraged | Yes |
| Multiple threads | No | Yes | Yes |
| Sleep statements | No | Yes | Yes |
| System properties | No | Yes | Yes |
| Time limit (seconds) | 60 | 300 | 900+ |
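To show how the table above can be read, the sketch below encodes it as data and picks the smallest size that permits what a test needs. The `smallestSizeFor` helper and the feature names are hypothetical, and two simplifications are assumed: “Discouraged” is treated as not allowed for medium tests, and the time limit is stored for reference only (with “900+” recorded as 900).

```javascript
const { strictEqual } = require('node:assert');

// The table as data, ordered from most to least restrictive.
// network levels: 0 = no access, 1 = localhost only, 2 = unrestricted.
const TEST_SIZES = [
  { name: 'small', timeLimitSeconds: 60, network: 0, allows: [] },
  {
    name: 'medium',
    timeLimitSeconds: 300,
    network: 1,
    // Assumption: "Discouraged" external systems are left out here.
    allows: ['database', 'fileSystem', 'threads', 'sleep', 'systemProperties'],
  },
  {
    name: 'large',
    timeLimitSeconds: 900, // the table lists "900+"
    network: 2,
    allows: ['database', 'fileSystem', 'externalSystems', 'threads', 'sleep', 'systemProperties'],
  },
];

// Return the first (smallest) size whose limits cover everything a test needs.
function smallestSizeFor({ network = 0, uses = [] } = {}) {
  const fit = TEST_SIZES.find(
    (size) => network <= size.network && uses.every((f) => size.allows.includes(f)),
  );
  if (!fit) throw new RangeError('no test size permits these needs');
  return fit.name;
}

strictEqual(smallestSizeFor(), 'small');
strictEqual(smallestSizeFor({ network: 1, uses: ['database'] }), 'medium');
strictEqual(smallestSizeFor({ uses: ['externalSystems'] }), 'large');
```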

Assess your team and your project’s needs, and remember that, technically, most of the solutions in that article essentially do the same thing. Ultimately, you’ll want a solution that allows you to efficiently and effectively test your application with speed and ease.

Sources: